Gradient

@Gradient_HQ

Open infrastructure for open intelligence. Lattica · Parallax · Echo

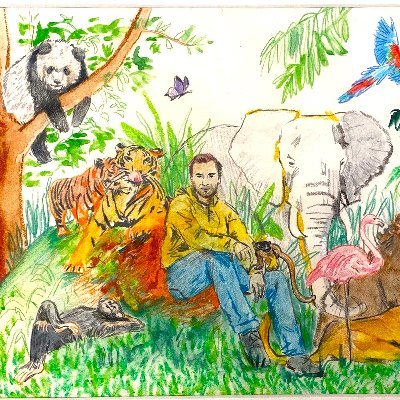

This GPT Image 2 prompt is going insanely viral right now.

“Redraw the attached image in the most clumsy, scribbly, and utterly pathetic way possible. Use a white background, and make it look like it was drawn in MS Paint with a mouse. It should be vaguely similar but also not really, kind of matching but also off in a confusing, awkward way, with that low-quality pixel-by-pixel feel that really emphasizes how ridiculously bad it is. Actually, you know what, whatever, just draw it however you want.”

We're hiring at Gradient.

Building open-source environment infrastructure for our distributed RL training stack — reproducible, scalable to thousand-GPU runs

Looking for 1–2 RL Environments engineers / tech leads: You've designed verifiers, built sandboxes for agentic RL rollouts, or shipped RL training data pipelines that survived contact with real training.

Domain depth in math, code, agent, tool, or GUI is a plus. PhD not required.

Also hiring research interns: PhD / Masters students with hands-on RLHF / RLVR / GRPO / DPO / agentic RL experience. Open-source footprint matters more than paper count. Most intern roles convert post-grad. No age cap. Founding-team-level equity for the right people.

DMs open.

Thrilled to see @tryParallax live in production on @Theta_Network.

This is exactly why @Gradient_HQ built Parallax: turning the world’s GPU mesh into a sovereign, distributed token factory.

Congrats on the milestone! 🫡

glad we could help!

with agentic adoption soaring, privacy and token cost are already the top concerns for both agent and human users.

that's what parallax is built for.

Catch @alex_mirran on DevNTell this Friday.

He’ll break down the infrastructure we're building at Gradient and show you exactly how to get started today.

RSVP below👇

Ready to learn about the Open Intelligence Stack?

🎙️ This week on DevNTell, we'll be joined by @alex_mirran, Head of BD at @Gradient_HQ, who'll give us an overview of the platform and more!

📅 April 17th

📋 RSVP today

When you scale parallel agents, prompt updates degrade fast. The more trajectories you process concurrently, the more generic your learned prompts become.

Our researchers worked with @lihanc02 and team on Combee to rethink how aggregation works at scale.

Results held up across GEPA and ACE even past 80 concurrent agents.

Read more on this research👇

Prompt Learning does not scale for parallel agents.

More parallel agents 🤖 = worse prompts 😭

Why? Processing too many trajectories concurrently damages the prompt update process.

🐝 We fix this with Combee:

→ preserves high-quality learnt system prompt

→ scales to more than 80 concurrent agents

→ up to 17× speedup without quality drop on top of ACE and GEPA

🥽Use Cases:

1. Prompt learning on agent traces collected at large scale

2. Parallel agent learning online with fast knowledge sharing

Read more below to learn how agents actually learn at scale ⬇️

If software no longer needs you to operate it, what does an “application” even mean?

That’s what we’re digging into at The Agentic Shift with panels, demos, and speakers from Google, PixVerse, MiniMax + more.

SF | Apr 8

Sign up here:

As fellow training nerds, UniPat AI’s approach caught our eye.

Synthesizing future-event data to fix outcome bias and actually beat human prediction markets is definitely something worth checking out!

Today we’re introducing Echo — our full-stack prediction intelligence system, which turns uncertainty🔮 into profit📈.

We Make Prediction General, Evaluable, Trainable and Profitable.

🌐Website:

Everyone's scrambling for more compute.

The harder problem is making distributed compute actually work for multi-agent systems.

That's what Echo-2 is built for.

The explosion of agentic AI has kicked off a mad rush for computing power, pushing central processing units back into hot demand

Benchmarks that test what models have memorized are saturating fast. ARC-AGI-3 is asking a harder question: can AI actually learn something new on the fly?

One direction we've been exploring: multi-agent orchestration. In our study, coordinating four frontier LLMs across multiple turns consistently matched or outperformed the strongest single model, even on tasks none of them could solve alone.

The gap between "best single model" and "best coordination of models" is where a lot of the real progress is hiding.

More on our multi-turn, multi-agent orchestration study:

Announcing ARC-AGI-3

The only unsaturated agentic intelligence benchmark in the world

Humans score 100%, AI <1%

This human-AI gap demonstrates we do not yet have AGI

Most benchmarks test what models already know, ARC-AGI-3 tests how they learn

Great to see multi-agent systems getting serious engineering attention.

One thing we think about a lot: as agents get more capable, the orchestration layer matters just as much as the models themselves.

Our work on Symphony explores what happens when you remove the central controller entirely and let agents coordinate across consumer hardware through decentralized task allocation and weighted voting.

We've achieved up to 41.6% accuracy gains over centralized frameworks, running on commodity GPUs with <5% orchestration overhead.
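
For a rough feel of what weighted voting means here, a minimal sketch follows. It is not the Symphony implementation: the function name `weighted_vote`, the example answers, and the weights are all hypothetical, and we simply assume each agent reports an answer plus a non-negative confidence weight.

```python
from collections import defaultdict


def weighted_vote(proposals):
    """Return the answer with the largest total weight.

    `proposals` is an iterable of (answer, weight) pairs, one per agent.
    Hypothetical helper for illustration only.
    """
    totals = defaultdict(float)
    for answer, weight in proposals:
        if weight < 0:
            raise ValueError("weights must be non-negative")
        totals[answer] += weight
    if not totals:
        raise ValueError("no proposals to vote on")
    # Ties go to the answer that was proposed first, because dicts keep
    # insertion order and max() returns the first maximal key.
    return max(totals, key=totals.get)


# Four agents, two candidate answers (made-up values).
proposals = [("42", 0.9), ("41", 0.6), ("41", 0.5), ("42", 0.1)]
winner = weighted_vote(proposals)
print(winner)
```

No single agent decides the outcome here: two moderately confident agents can outvote one very confident agent, and no central controller is needed to tally the result.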

Find out more in our Symphony paper:

New on the Anthropic Engineering Blog:

How we use a multi-agent harness to push Claude further in frontend design and long-running autonomous software engineering.

Read more:

local ai has picked up fast since openclaw dropped.

with the latest wave of small capable models, more people are running serious workloads on their own hardware.

if you missed this great local ai tutorial from @yacinelearning, or want a refresher on how distributed scheduling actually works under the hood, it's worth a watch over the weekend!

Dobby is a free elf now.

Open models, open orchestration, open compute. The agentic RL stack that used to live inside walled gardens just showed up on hardware you can order and frameworks you can fork.

No masters needed.

Thank you Jensen and NVIDIA! She’s a real beauty! I was told I’d be getting a secret gift, with a hint that it requires 20 amps. (So I knew it had to be good). She’ll make for a beautiful, spacious home for my Dobby the House Elf claw, among lots of other tinkering, thank you!!

Our GTC takeaway is clear: NVIDIA is betting hard on open.

- NemoClaw turns OpenClaw into enterprise infrastructure.

- Nemotron 4 will be open-sourced.

- Nemotron Coalition puts eight labs on a shared open frontier model.

This is what we've been building toward. Open infrastructure for open intelligence is the direction the biggest AI companies are taking.

some parallax dev lunch break fun:

- a macbook pro, a mac mini, some cables

- zero internet, zero cost

- openclaw running on parallax

no subs. no token burn. nothing leaves the desk.

just local agents vibing.

Big shoutout to the Messari team for the deep dive into Echo-2 and the Open Intelligence Stack.

10.6x cheaper. Same model quality.

Echo-2 turns hardware volatility into a feature, not a bug.

Logits waitlist is open for researchers.

They crashed. They fell. They exploded on the pad.

Then they got back up. Faster, wiser, stronger.

Breakthroughs don't come from one perfect run, they come from the freedom to fail 100 times.

Introducing Echo-2, distributed RL that boosts AI research throughput by 10x.

Zero code = Zero excuses.

This is how we bring the future of AI a claw🦞closer to everyone.

Secure your spot now👇

Open consumer AI apps should run on open infra.

We’re proud to partner with @myshell_ai to bring verifiable, high-throughput inference to the creator economy.

Creators shouldn't have to worry about latency or trust. We handle the groundwork; MyShell handles the charm.
